When we launched the Gathering for a Just Recovery, we vowed to make it more accessible than anything we’ve ever done before. Before we had 10,000 pre-registrants and before we had over 100 partners and before we had organized over 200 workshop sessions, we needed to start with the basics. We vowed to educate ourselves so we could quickly learn what an online conference could do, at scale, with the tools that exist now.

Unfortunately, the technology of online conference systems reflects the ableist culture we swim in. The online engineers assumed people could see. The salespeople assumed everyone could understand English. The platforms assumed your internet was strong.

When we make a system that excludes people’s experiences, it’s reflected in every aspect. The technology reflected that.

We went through a series of disappointments: we fell in love with (many) interactive features or systems that were great — but exclusive. We let that journey of disappointment grow our empathy — and it left us with a problem to solve. How do you make imperfect things as accessible as possible?

Here’s what we did and what we ended up with.

Ask what the needs are.

To our knowledge, 350 has never asked its people what their accessibility needs are. This creates a self-perpetuating problem: if you never ask, you never find out. Our colleagues with disabilities kept saying, “If nobody tells us it’s accessible for us, we assume it’s not.” Experience tells us that’s typically true.

So we asked a series of questions about people’s language needs, visual needs, auditory needs, and other impairments that might affect their participation in the gathering.

A few insights, to give a sense:

- Our highest language requests were for English, Spanish, Portuguese, French, and Turkish — these largely reflect 350’s organizing footprint;

- Closed captioning was in higher demand than we expected: almost 2,000 people requested it. Some were hearing impaired, but most said it helps them understand the English better (an example of how supporting one margin can support others);

- Despite the option, sign language wasn’t requested. Given what we learned about International Sign Language not being an international solution for meetings, this relieved some pressure as we were researching support for multiple sign language variants;

- Requests for screen reader support and for support for folks with visual disabilities were very low (just a handful). We hazard a guess this may be because 350 hasn’t been great at this in the past, so we decided to go ahead and prioritize this support as best we can.

Find a system that meets most of the needs.

We tested 30+ different conference systems. It took us a while but we found an online conference platform that was inside our budget range and takes disability access seriously: PheedLoop. (And multilingual… and …)

They’re consistent with WCAG, AODA, and ADA — but that’s a minimum bar.

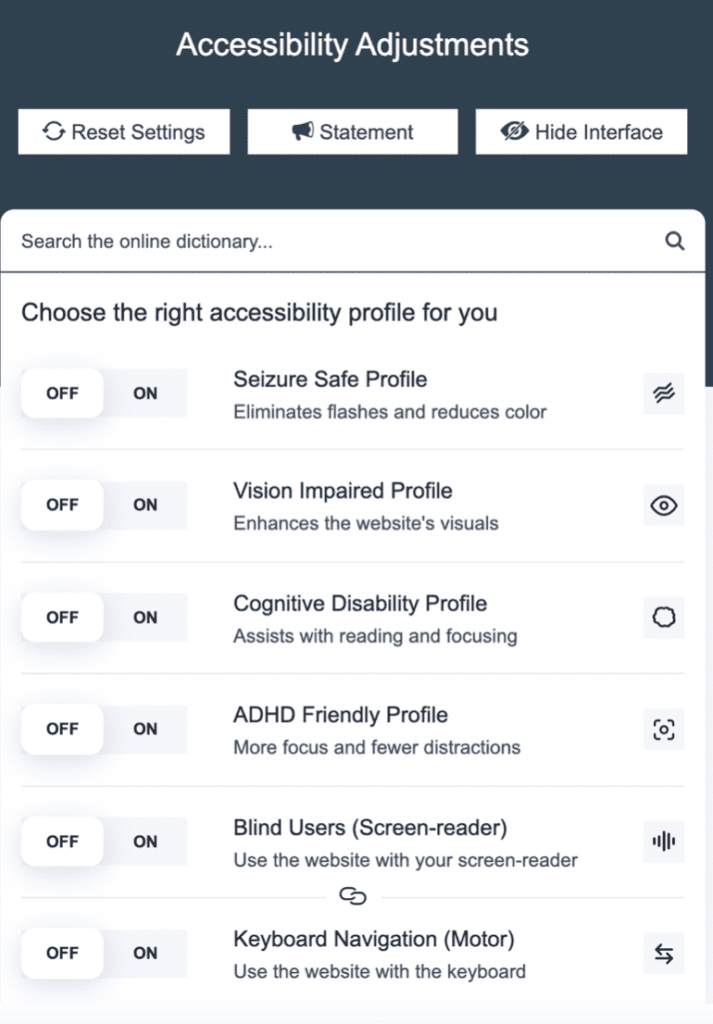

Caption: Image of PheedLoop interface: Title “Accessibility Adjustments” at top with three buttons: “Reset Settings, Statement, and Hide Interface.” Text area for “Search the online dictionary” with a series of on/off buttons offering to “Choose the right accessibility profile for you”. The buttons include: Seizure Safe Profile (eliminates flashes and reduces color), Vision Impaired Profile (enhances the website’s visuals), Cognitive Disability Profile (assists with reading and focusing), ADHD Friendly Profile (more focus and fewer distractions), Blind Users (screen-reader) (use the website with your screen-reader), Keyboard Navigation (motor) (use the website with the keyboard).

Above you can find some of the options that PheedLoop supports. They have other options that make it easy to do color shifts, make the font size bigger, and other accessibility options often neglected.

In pre-testing their system was adequate. And that made it far superior to almost everything else we tested.

But that is only the beginning of the challenge. Since we’re hosting over 200 workshops and plenary sessions in a mix of almost a dozen languages, we’ve got to think about how to support captioning and interpretation.

Here is where it began to get really tricky.

Find more systems that expand its capabilities

An additional reason we picked PheedLoop was that they were responsive. We insisted on being multilingual and they responded by adding 12 languages to their services. We offered our translation services, so they added Turkish and Indonesian for us, too.

But PheedLoop alone couldn’t provide everything.

So we added to the mix. Allow us to just get a little nerdy on the details of what we used.

We relied on Zoom for our workshop sessions. This wasn’t an easy choice — but Zoom is known, and folks in the disability community have grown used to what it can and cannot do. And it’s better than many. Zoom also saved our speakers from learning a new system.

We added captions via SyncWords. We loved how SyncWords works smoothly for our plenary sessions. A human hears the language and transcribes the content. Our testing showed this worked smoothly.

Alas, this doesn’t work well with Zoom. Zoom doesn’t (yet) allow you to set up captions ahead of time on a workshop. And with 200 workshops — sometimes 40 simultaneously — we didn’t have the capacity to support the tech required.

For those workshop sessions we set up Zoom’s auto-captioning feature, where Zoom automatically takes the spoken words in English and puts them into text (unfortunately there is no translation). The automatic function is better than nothing but admittedly imperfect.

Finally, interpretation proved to be quite difficult. We played with several options that didn’t provide what we needed or cost vastly more than we could afford. But we landed on Clevercast, which takes any RTMP (or HLS) feed and lets us set up multiple interpretation rooms. This worked smoothly with our system, which happens to be live streaming from StreamYard.

All of this we’ve tested multiple times with a range of colleagues to help give feedback on how it all works. We’re pretty pleased with the end result.

The final result: What we can offer

For all the plenary sessions (panels and cultural sessions) we offer:

- Interpretation into the seven most requested languages (English, French, Spanish, Portuguese, Turkish, Indonesian, Japanese – plus German, Chinese and Bengali for some of these sessions)

- Live streams and recorded sessions for later (better for those with low internet connections)

- Live captioning in English by a human transcriber, with those captions auto-translated into the languages for which we had significant caption requests (Spanish and French). We have also added Korean to some sessions, as this was one language we were not supporting and for which we got a fair number of requests.

All of the Movement Stories and Workshops will be hosted on Zoom. For these we’ll offer:

- Interpretation as noted under each session description*

- Live captioning via Zoom’s auto-captioning service*

- Preparation for our 250+ session leaders on running accessible sessions, with tips such as reading slides aloud and adding captions

- For people with visual disabilities, we encourage downloading “Be My Eyes.”

* These options don’t work in break-out rooms.

Because there was no demand, we are not offering sign language interpreters. We are unable to support interpretation or captions in languages requested by fewer than a dozen people.

A final note

Because these sessions are online, we’ve had to find technology that supports wider accessibility. But technology will never be an equalizer if we never learn to be in each other’s shoes.

Real relationships, real testing, and really thinking it through from many angles — that’s hard work that will not go away as long as we have a system that divides us up.

We’re reporting back what we’re doing here not for kudos, but so people can see us struggling to get better. Those with the enthusiasm to help, please keep advising and helping us. And those with frustration, keep prodding us with sticks to get better. A Just Recovery requires all of us.